Feedback Is Not an Opinion. It's a Decision.

Product feedback is everywhere. Useful feedback is rare. Two years building an AI reviewer taught me what separates the signal from the noise.

If you’ve been collaborating with teams to build products for a while, you have probably shared thousands of feedback messages by now. Sometimes ad-hoc, sometimes urgent, sometimes right before a deadline, but certainly always needed.

When I joined onBeacon as a Founding Designer two years ago, I didn’t expect feedback to become the foundation for what I was about to build.

The problem is, we’ve confused feedback with a personal opinion when, in reality, it should help make a decision. So, what does useful feedback actually look like? Turns out, it’s harder to answer than it sounds.

When feedback turns into commentary

Raise your hand if you’ve ever been overwhelmed by feedback. I know I have. Especially early in my career, I thought every piece of feedback was a task to take on. I would come back from a meeting with what felt like a to-do list. Pretty straightforward, right?

But what happens when two people with strong opinions have contradictory thoughts? Or when you receive feedback that feels completely wrong, even if you can’t put it into words? How do you figure out what actually matters?

That’s the moment when feedback stops feeling valuable and turns into competing voices, with the loudest one winning most of the time. If it comes from your boss’s boss, it can seem like you can’t say no. Maybe an engineer claims it’s not doable right after you share your idea. Or a client says something isn’t working. They don’t know why. They’ll know it when they see it.

When feedback doesn’t lead to a decision, it’s not doing its job. It’s just adding noise.

What “useful” actually forced me to figure out

When I started building the feedback plugin, the core idea seemed obvious: give it a screen, get feedback on copy, accessibility, and UI decisions. What I didn’t anticipate was how hard it would be to define what “good feedback” actually meant. The real challenge was shaping the feedback itself.

When designers and PMs can just drop their screens inside ChatGPT and ask for feedback, what makes this plugin different? More importantly, what does “useful” look like in product feedback?

That question opened up more exploration than I expected. Some feedback felt too directive. Some too conversational. Some simply unconvincing. The process took a while. User interviews, experiments, and countless iterations, each one shifting how I understood what I was actually trying to build.

Each small improvement made the next one clearer. That’s a pattern I’ve come to recognize as the most critical kind of progress. None of my biggest breakthroughs here could be displayed in a visual design portfolio. They happened at the level of natural language, in figuring out how to guide a model toward outputs that actually help someone take a step forward.

There’s no written playbook for this. That makes it the most unexpected part of my job, and the one with the longest-lasting impact. The most rewarding kind.

What good feedback is actually made of

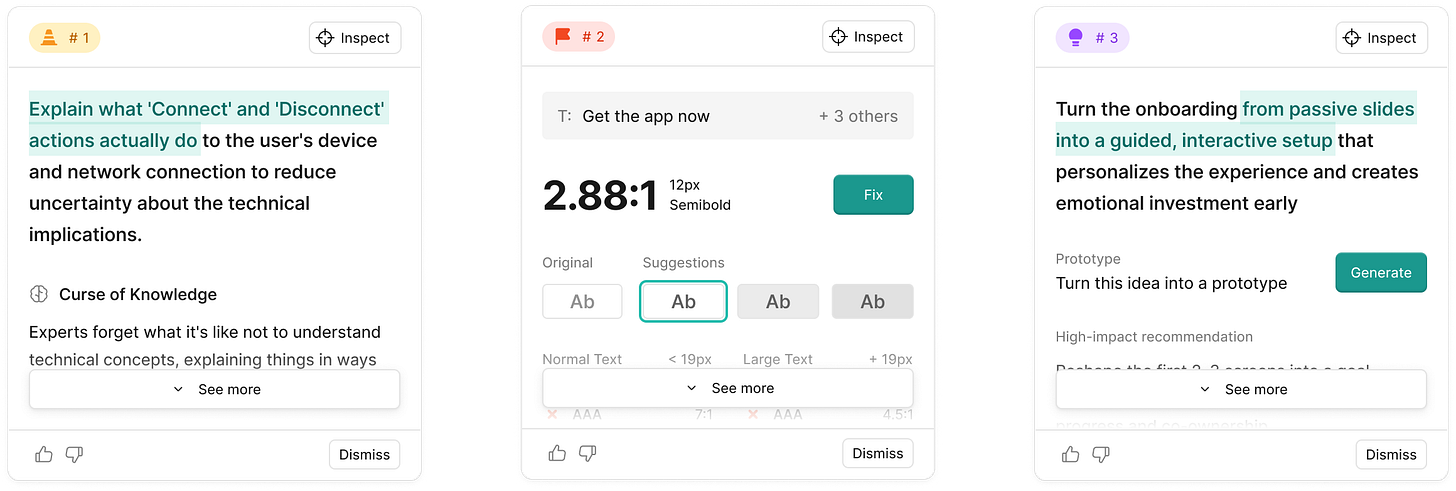

The structure of the feedback turned out to be the first key learning, and the hardest to get right. What started as a simple format of feedback plus rationale evolved into something more layered. Not by design, but by paying attention to how feedback actually gets used.

Good feedback doesn’t bury the lede. It starts with the relevant point, stated concisely, specifically, and accurately. The next layer is a suggestion: what to change and how. This segment is all about action.

Below that, supporting evidence. A behavioral principle, a user quote, or a relevant data point that makes the case with tangible proof. Then the rationale for why this matters at all. And if you need to go further, the source material to dig deeper is there.

Not every piece of feedback needs all five layers. A rule-based accessibility issue is different from new ideas for an onboarding flow. The goal of this sequence is progressive disclosure: you spend only as much time on it as the decision requires.
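
As a rough illustration, the five layers and the idea of progressive disclosure could be sketched as a small data structure. This is only a sketch under stated assumptions, not how the plugin is actually built: the class name `FeedbackNote`, its field names, the `render` method, and the sample note are all hypothetical and chosen for this example.

```python
from dataclasses import dataclass
from typing import List, Optional

# Layer order follows the essay: point, suggestion, evidence, rationale, source.
# The field names are hypothetical labels for those layers.
LAYER_ORDER = ("point", "suggestion", "evidence", "rationale", "source")


@dataclass
class FeedbackNote:
    point: str                        # the relevant point, always stated first
    suggestion: Optional[str] = None  # what to change and how
    evidence: Optional[str] = None    # principle, user quote, or data point
    rationale: Optional[str] = None   # why this matters at all
    source: Optional[str] = None      # material for digging deeper

    def render(self, depth: int = 1) -> List[str]:
        """Return the first `depth` layers in order, skipping empty ones."""
        lines = []
        for name in LAYER_ORDER[: max(depth, 1)]:
            value = getattr(self, name)
            if value:
                lines.append(f"{name.capitalize()}: {value}")
        return lines


# An invented, rule-based accessibility note that only needs three layers.
note = FeedbackNote(
    point="The 'Skip' link text has low contrast against its background.",
    suggestion="Darken the text until it reaches a 4.5:1 contrast ratio.",
    source="WCAG 2.1, Success Criterion 1.4.3 (Contrast Minimum)",
)

# A quick skim shows only the point; going deeper reveals the rest.
for line in note.render(depth=5):
    print(line)
```

Calling `note.render(depth=1)` returns just the point, while `note.render(depth=5)` returns every layer that was filled in. Keeping the lower layers optional reflects the idea that a rule-based issue needs less scaffolding than an open-ended one.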

I recently came across the Minto Pyramid, a framework for structuring persuasive arguments, and recognized the shape immediately. Lead with the conclusion, then support it. That’s what I’d been building toward without knowing it had a name. The best structures usually come from watching how people actually behave, not from looking for frameworks first.

The part that can’t be automated

When you’re about to ask for feedback from someone who’s not involved in the process, do you just ask them, “What can be better?” I bet not. Structure is the vehicle for feedback. Context is what makes it land.

In the early days, every time I showed someone a generated feedback example, I’d hear the same thing: “This is good, but it’s too generic.” But you know what’s not generic? Their specific context.

A designer reviewing an onboarding flow, a PM reviewing a product spec, or an executive evaluating a high-stakes project share the same underlying questions: Who’s this for? What problem does it solve? What does success look like here? Without those answers, you’re evaluating execution without understanding the goal.

Those are the lenses that make feedback useful, not just correct. Each specific note is now connected to the bigger picture. That’s what’s hard to automate, because it requires judgment about the whole product, not just the piece in front of you. And when humans do it well, it’s irreplaceable.

Getting the product context into the output remains the hardest part. Without it, quality drops. Without quality, there’s no confidence. Without confidence, trust is gone.

This is why quality improvements take priority on our team: even as models become more powerful, more capable, and cheaper, the quality of the results will be the real differentiator. That’s the thing about human feedback worth studying: it moves people forward. That’s the bar.

Parting thoughts

I didn’t expect AI to teach me how to assess the quality of feedback. Trying to articulate what I knew forced me to deconstruct things I’d only ever done intuitively. Skills that had become autopilot after years of practice suddenly needed words. Going through thousands of results and trying to put a number on quality will do that to you.

It sharpened my instincts. More than that, it gave me the language to explain what good feedback actually is, something I’d been doing before I could describe it clearly.

The ideal outcome of feedback is simple: the person receiving it should feel confident about what to do next. That sounds obvious. Getting feedback that’s specific, grounded, and trustworthy is not. When someone says, “I’ve got this,” you know you made it.

Shortcuts

How to Build a Truly Useful AI Product, an insightful essay by Chris Pedregal that ignited my bet on quality.

The art of influence, a Lenny’s Podcast episode with Jessica Fain discussing fundamentals to get buy-in for your ideas.

Strategy, not self-expression, a simple framework for giving feedback that drives behavior change.

That’s where curiosity led me this month. Your path will look different, because context always matters.

If this sparked something, let’s continue the conversation on Twitter. And if someone you know is navigating their own creative uncertainty, share this their way.

Keep exploring,

Laura ✌️